Last Wednesday was April Fool’s Day. But I hardly saw any April Fool’s pranks. When I realized that, I thought to myself, “This is a sign of the times.”

April Fool’s probably started in 1582, when much of Europe switched from the Julian to the Gregorian calendar, which moved New Year’s from April 1st to January 1st. Those who still clung to the old calendar were called April Fools.

An alternative theory traces it to spring festivals that celebrated jokes, chaos and role reversals, like the Roman Hilaria or the medieval European “Feast of Fools.”

But April Fool’s really hit its peak when mass media joined in the fun. It was the BBC in Britain that got the ball rolling in 1957, with their famous “Spaghetti Tree Harvest” news documentary. Thousands jammed the BBC switchboards asking how they could grow their own spaghetti trees. The April Fool’s news story became a BBC tradition.

Other media outlets followed in the BBC’s footsteps. In 1977, that stiff-upper-lipped stalwart of British journalism, The Guardian, published a travel supplement for “San Serriffe” – a tropical nation made up of two main islands, Upper Caisse and Lower Caisse. The leader, General Pica, had a palace in the capital city of Bodoni. Anyone with some graphic design experience would soon realize the entire seven-page special supplement was full of typography puns, but it seems the British weren’t exactly that “type” – U.K. travel agencies received several calls from people wanting to book trips there.

Brands thought elaborate pranks would show how hip and relevant they were and jumped on the April Fool’s bandwagon in the 1980s and 90s. Taco Bell “bought” the Liberty Bell in 1996 and renamed it the Taco Liberty Bell. In 1998, Burger King introduced the Left-Handed Whopper. Even Big Tech joined the party with that wacky sense of humor computer engineers are known for. In 2013, Google introduced Google Nose, a search engine for smells. It included “Wet Dog” and had a Street Sense feature.

Ironically, Google also introduced Gmail on April 1st, in 2004, blurring the line between prank and product launch. No one believed you could get a free email account with 1 GB of storage. Competitors offered 2 to 4 megabytes.

Let’s fast forward to April 1, 2026. On that day – last Wednesday – crickets. There was no ha-ha to be found. And I thought, “What a sad state the world is in when we can’t even poke fun at ourselves.”

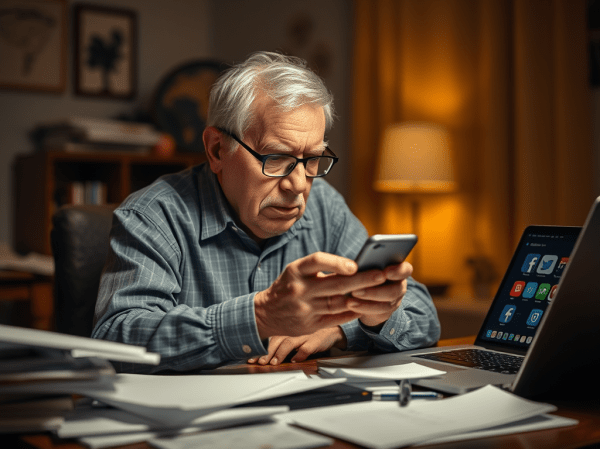

Maybe it’s because “Fake News” is now a real thing, 365 days a year, not just on April First.

Also, if you’re going to play a prank now, it’s probably going to be on social media. And how the hell can you compete with the wall-to-wall misinformation madness that fills everyone’s feed, every single day of the year?

But then I realized that attitudes towards April Fool’s have followed an arc directly related to how we get our information through media.

From the 1950s to the 90s, information was scarce and mass media outlets were the gatekeepers. Trust was implied in the relationship, and it was that trust that was slyly mocked on April the First. The April Fool’s prank hearkened back to the medieval tradition of role reversal during the Feast of Fools, when traditional hierarchies were inverted. This meant that – for one day – even the sober British media could play the fools. It was all done in a “wink wink” kind of way.

Then, in the late 90s and early 2000s, information became abundant. Those playing a prank expected to be fact-checked. A prank was now a way to drive viral traffic to online sources of information, which is why brands started to jump aboard with their own April Fool’s pranks.

But now, in the age of misinformation and A.I. slop, every day is April Fool’s Day. Information (and misinformation) isn’t just abundant, it’s a pollutant. It’s everywhere and it’s often intentionally toxic. The very thing we used to smile about is a force that’s shattering our society.

It’s hard to laugh at that.