My fellow Media Insider Maarten Albarda called it “The Big Tobacco Moment for Social Media” in his post last week. Then, just yesterday, Steve Rosenbaum added that the K.G.M. v. Meta Platforms case “signals a shift that cuts directly through the core defense platforms have relied on for decades.”

It was a seismic decision, and I’m pretty sure the doors are closed in the various conference rooms of 1 Meta Way, Menlo Park, California, as a bunch of sweaty lawyers and Meta staff roll out the whiteboards (or the Meta Quest virtual reality equivalent) and roll up their sleeves to assess the potential damage and draw up a battle plan. Let’s take a moment to speculate about what they may be talking about.

In at least one of those conference rooms, Meta’s legal team is assessing one line of defence, which I’ll call Project “Hail Mary,” tapping into the current pop culture Zeitgeist. This involves an appeal of the $6 million decision. It’s not this case that’s worrying them. It’s the thousands waiting in the queue for the legal precedent to be set. The Meta legal team will be spending much of their foreseeable future in a courtroom. Even they know that the chances of a successful appeal are slim.

The second line of defence is to quantify the impact of this on Meta’s bottom line if the appeal is not successful. So let’s unpack that, because it deals with the elephant in the room, touched on in both Steve’s and Maarten’s posts: Is this the beginning of a slippery slope that will lead to the dismantling of algorithmic ad targeting and the demise of the endless scroll for everyone, or just for legal minors?

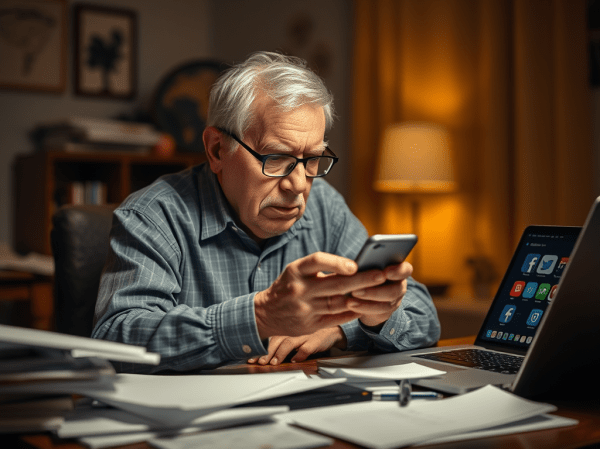

If we follow the lead of Australia, the first country to implement a ban on social media, it will just be minors – those under 16. The legislation was passed in late 2024 and the ban officially took effect on December 10, 2025.

There are several countries around the world looking at implementing a similar ban, including Canada. Most are watching to see how Australia implements and polices its ban, as there are several thorny issues at play here. The countries seriously looking at it tend to share a similar legislative sentiment with Australia when it comes to consumer rights and privacy concerns.

The U.S., under the current administration, is the least likely to implement federal restrictions on social media. Still, that is not keeping several states from introducing their own legislation. What the K.G.M. v. Meta decision does do is move the debate from the arena of federally controlled media to that of state-controlled online safety, privacy and mental health concerns. All will be watching the pending suits, which will likely fill up dockets in U.S. courts for the next few years at least.

Given the international aspect of this, it’s instructive to look at how Meta’s revenues break down by region.

The biggest share, 39%, is the U.S. and Canada, but 94% of that comes from the U.S. We’re a Meta rounding error up here.

The Asia-Pacific is the second biggest regional market – with 26.8% of global revenues. While the user numbers are huge, the revenue per user is much smaller than in North America. Several countries in this market are considering some type of age-based restriction on social media usage – largely driven by the academic concerns of parents and educators in China, Japan and Korea.

Next is Europe, with 23.2% of Meta’s revenue pie. If there is any jurisdiction likely to follow Australia’s lead, it’s the E.U., which has consistently shown leadership in implementing privacy protection legislation.

Finally, there is the rest of the world, which collectively accounts for about 11% of Meta revenues. When you consider this includes all of Africa, all of South America and whatever else is left, you can appreciate that attitudes towards legislation will be all over the map, both literally and figuratively.
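For anyone who wants to check the regional math, here is a minimal Python sketch. It uses only the percentages quoted in this column; the variable and function names are my own, and the "rest of world" figure is the approximate 11% given above.

```python
# Regional shares of Meta's global revenue, in percent, as quoted in this column.
# "Rest of world" is approximate ("about 11%").
regional_share = {
    "U.S. and Canada": 39.0,
    "Asia-Pacific": 26.8,
    "Europe": 23.2,
    "Rest of world": 11.0,
}

# Per the column, 94% of the U.S. and Canada share comes from the U.S.
US_PORTION_OF_NORTH_AMERICA = 0.94


def split_north_america(share_pct, us_portion):
    """Split the combined U.S. and Canada share into its two parts."""
    us = share_pct * us_portion
    canada = share_pct - us
    return us, canada


us_share, canada_share = split_north_america(
    regional_share["U.S. and Canada"], US_PORTION_OF_NORTH_AMERICA
)
total = sum(regional_share.values())

print(f"U.S. share of global revenue:   {us_share:.1f}%")
print(f"Canada share of global revenue: {canada_share:.1f}%")
print(f"Sum of all regions:             {total:.1f}%")
```

On these figures, the U.S. alone works out to roughly 36.7% of Meta's global revenue and Canada to roughly 2.3%, which is the "rounding error" mentioned earlier.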

Now, let’s say that a significant chunk of Meta’s revenue – about 30 to 40% – comes from regions likely to pass legislation similar to Australia’s. Even then, that legislation will undoubtedly be directed only at minors younger than 16, who today make up less than 10% of Meta’s user base (between Instagram and Facebook). All those young people have gone to TikTok (where they make up 25% of its user base).

So, what Meta’s financial planners are probably talking about is the fact that – even in a worst-case legal scenario – we’re talking about 3 to 4% of their total user base that may be legislatively restricted in some form or another. That’s the 30 to 40% of the business in regions likely to legislate, multiplied by the less than 10% of users who are under 16. If you’re in triage mode, that’s not severe enough to consider major surgery or amputation. Probably a band-aid will do the trick.
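The back-of-the-envelope math behind that 3 to 4% can be sketched in a few lines of Python. This is a rough illustration, not a forecast. It makes two simplifying assumptions of mine that go beyond the column's figures: that a region's share of revenue stands in for its share of Meta's business overall, and that minors are spread evenly across regions at the 10% upper bound. The function name is also my own.

```python
def restricted_share(region_share, minor_share):
    """Fraction of the total that falls in legislating regions AND is under 16.

    Assumes (for illustration only) that minors are spread evenly across
    regions, so the two fractions can simply be multiplied.
    """
    return region_share * minor_share


# Column's figures: 30 to 40% from regions likely to legislate,
# and minors at less than 10% of the user base (10% used as the upper bound).
MINOR_SHARE_UPPER_BOUND = 0.10

low = restricted_share(0.30, MINOR_SHARE_UPPER_BOUND)
high = restricted_share(0.40, MINOR_SHARE_UPPER_BOUND)

print(f"Worst-case restricted share: {low:.0%} to {high:.0%}")
```

Because the 10% figure is a ceiling, the real number would come in lower still, which only strengthens the band-aid diagnosis.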