The world is having a pandemic-proportioned wave of Ostrichitis.

Now, maybe you haven’t heard of Ostrichitis. But I’m willing to bet you’re showing at least some of the symptoms:

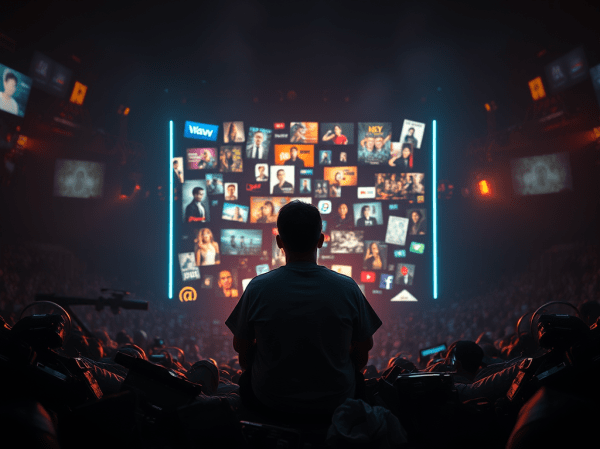

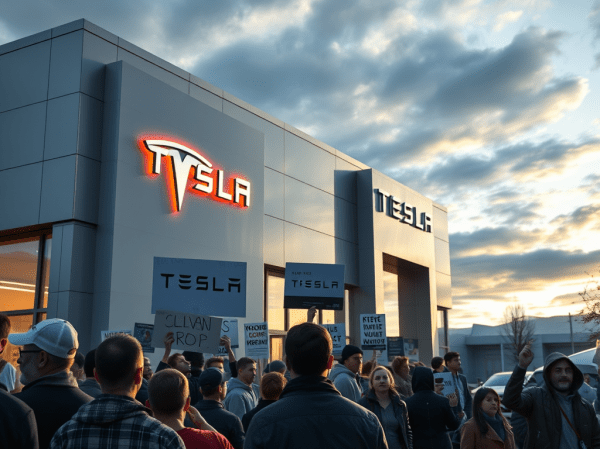

- Avoiding newscasts, especially those that feature objective and unbiased reporting

- Quickly scrolling past any online news items in your feed that look like they may be uncomfortable to read

- Dismissing out of hand information coming from unfamiliar sources

These are the signs of Ostrichitis – or the Ostrich Effect – and I have all of them. This is actually a psychological effect, more pointedly called willful ignorance, which I wrote about a few years ago. And from where I’m observing the world, we all seem to have it to one extent or another.

I don’t think this avoidance of information comes as a shock to anyone. The world is a crappy place right now. And we all seem to have gained comfort from adopting the folk wisdom that “no news is good news.” Processing bad news is hard work, and we just don’t have the cognitive resources to crunch through endless cycles of catastrophic news. If the bad news affirms our existing beliefs, it makes us even madder than we already were. If it runs counter to our beliefs, it forces us to spin up our sensemaking mechanisms and reframe our view of reality. Either way, there are way more fun things to do.

A recent study from the University of Chicago attempted to pinpoint when children start avoiding bad news. The research team found that while young children don’t tend to put boundaries around their curiosity, as they age they start avoiding information that challenges their beliefs or threatens their own well-being. The threshold seems to be about 6 years old. Before that, children actively seek information of all kinds (as any parent barraged by never-ending “Whys” can tell you). After that, children start strategizing about the types of information they pay attention to.

Now, like everything about humans, curiosity tends to be an individual thing. Some of us are highly curious and some of us religiously avoid seeking new information. But even if we are the curious sort, we may pick and choose what we’re curious about. We may find “safe zones” where we let our curiosity out to play. If things look too menacing, we may protect ourselves by curbing our curiosity.

The unfortunate part of this is that curiosity, in all its forms, is almost always a good thing for humans (even if it can prove fatal to cats).

The more curious we are, the better tied we are to reality. The lens we use to parse the world is something called a sense-making loop, which I’ve often referred to in the past. It’s a processing loop that compares what we experience with what we believe, referred to as our “frame”. For the curious, this frame is often updated to match what they experience. For the incurious, the frame is held on to stubbornly, often by ignoring new information or bending it to conform to their beliefs. A curious brain is a brain primed to grow and adapt. An incurious brain is one that is stagnant and inflexible. That’s why the father of modern-day psychology, William James, called curiosity “the impulse towards better cognition.”

When we think about the world we want, curiosity is a key factor in defining it. Curiosity keeps us moving forward. The lack of curiosity locks us in place or even pushes us backwards, causing the world to regress to a more savage and brutal place. Writers of dystopian fiction knew this. That’s why authors including H.G. Wells, Aldous Huxley, Ray Bradbury and George Orwell all made a lack of curiosity a key part of their bleak future worlds. Our current lack of curiosity is driving our world in the same dangerous direction.

For all these reasons, it’s essential that we stay curious, even if it’s becoming increasingly uncomfortable.