(This is my annual look back at what the MediaPost Media Insiders were talking about in the last year.)

Last year at this time I took a look back at what we Media Insiders had written about over the previous 12 months. Given that 2024 was such a tumultuous year, I thought it would be interesting to do it again and see if that was mirrored in our posts.

Spoiler alert: It was.

If MediaPost had such a thing as an elders’ council, the Media Insiders would be it. We have all been writing for MediaPost for a long, long time. As I mentioned, my last post was my 1000th for MediaPost. Cory Treffiletti has actually surpassed my total, with 1,154 posts. Dave Morgan has written 700. Kaila Colbin has 586 posts to her credit. Steven Rosenbaum has penned 371, and Maarten Albarda has 367. Collectively, that is well over 4,000 posts.

I believe we bring a unique perspective to the world of media and marketing and — I hope — a little gravitas. We have collectively been around several blocks numerous times and have been doing this pretty much as long as there has been a digital marketing industry. We have seen a lot of things come and go. Given all that, it’s probably worth paying at least a little bit of attention to what is on our collective minds. So here, in a Media Insider meta analysis, is 2024 in review.

I tried to group our posts into four broad thematic buckets and tally up the posts that fell into each. Let’s take them in reverse order, from fewest posts to most.

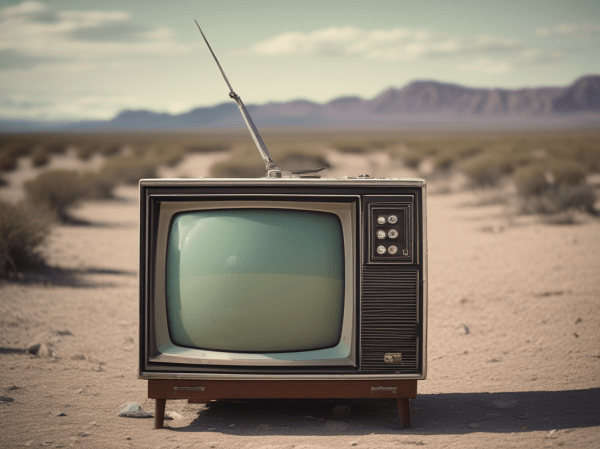

Media

Technically, we’re supposed to write on media, which, I admit, is a very vaguely defined category. It could probably be applied to almost everything we wrote, in one way or the other. But if we’re going to be sticklers about it, very few of our posts were actually about media. I only counted 12, the majority of these about TV or movies. There were a couple of posts about music as well.

If you define media as a “box,” we were definitely thinking outside of it.

It Takes a Village

This next bucket falls more into the “Big Picture” territory we Media Insiders seem to gravitate toward. It goes to how we humans define community, gather in groups and find our own places in the world. In 2024 we wrote 59 posts that I placed in this category.

Almost half of these posts looked at the role of markets in our world and how the rules of engagement for consumers in those markets are evolving. We also looked at how we seek information, communicate with each other and process the world through our own eyes.

The Business of Marketing

All of us Media Insiders either are or were marketers, so it makes sense that marketing is still top of mind for us. We wrote 80 posts about the business of marketing. The three most popular topics were — in order — buying media, the evolving role of the agency, and marketing metrics. We also wrote about advertising technology platforms, branding and revenue models. Even my old wheelhouse of search was touched on a few times last year.

Existential Threats

The most popular category was not surprising, given that it reflects the troubled nature of the world we live in. Fully 40% of the posts we wrote (99 in total) were about something that threatens our future as humans.

The number-one topic, as it was last year, was artificial intelligence. There is a caveat here. Not all the posts were about AI as a threat. Some looked at the potential benefits. But the vast majority of our posts were rather doomy and gloomy in their outlook.

While AI topped the list of things we wrote about in 2024, it was followed closely by two other topics that also gave us grief: the death knell of democracy, and the scourge of social media.

The angst about the decay of democracy is not surprising, given that the U.S. has just gone through a WTF election cycle. It’s also clear that we collectively feel that social media must be reined in. Not one of our 28 posts on social media had anything positive to say.

As if those three threats weren’t enough, we also touched briefly on climate change, the wars raging in Ukraine and the Middle East, and the disappearance of personal privacy.

Looking Forward

What about 2025? Will we be any more positive in the coming year? I doubt it. But it’s interesting to note that the three biggest worries we had last year were all monsters of our own making. AI, the erosion of democracy, and the toxic nature of social media are all things that sit squarely within our purview. Even if these things were not created by media and marketing, they certainly share the same ecosystem. And, as I said in my 1000th post, if we built these things, we can also fix them.