Brandt Dainow at Think Metrics shared the results of his campaign performance with Google Adwords and came up with the following conclusions:

- There is no relationship between the position of an advertisement in the Google Ad listings and the chance of that ad being clicked on.

- Bidding more per visitor in order to get a higher position will not get you more visitors.

- The number one position in the listings is not the best position.

- No ad position is any better than any other.

- The factor which has the most bearing on your chance of being clicked on is the text in your ad, not the ad’s position.

These conclusions were arrived at after analyzing the Google ads he ran this year. He says,

“while position in the listings used to be important, it is not anymore. People are more discriminating in their use of Google Ads than they used to be; they have learned to read the ads rather than just click the first one they see”

This runs directly counter to all the research we’ve done, and also to that done by others, including Atlas OnePoint. So I decided it was worth a deeper dive.

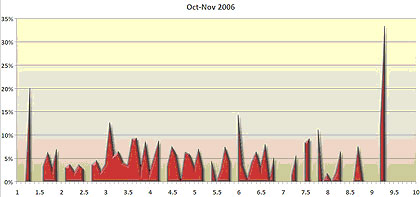

First, some facts about the analysis. It was done on ads he ran in October and November of last year, for the Christmas season. He acknowledges that this isn’t a definitive analysis, but the results are surprising enough that he encourages everyone to test their own campaigns.

In the following chart, he tracks the click through per position.

Brandt expected to see a chart that started high on the left, and tapered down as it moved to the right. But there seemed to be little correlation between position and click through. This runs counter to our eye tracking, which showed a strong correlation, primarily on first page visits. Top sponsored ads on Google received 2 to 3 times the click throughs.

Further, Atlas OnePoint did some analysis from their data set, and similarly found a fairly high correlation between position and click through on Google and Overture/Yahoo.

So why the difference?

Well, here are a couple of thoughts right off the bat. Dainow’s data is exclusively for his campaigns. We don’t see click through rates for the other listings on the page, both paid and non-paid, so we can’t see how his ads stack up against them. Also, it may be that for the campaigns in question, Brandt’s creative is more relevant than the other ads that show. He makes the point that creative is more important than position. I don’t completely agree. The two work together. The odds of being seen are substantially higher in the top spots, and your creative doesn’t work if it isn’t seen. The discriminating searcher that Dainow sees emerging, who takes the time to read all the ads, isn’t the searcher we see in eye tracking tests. That searcher quickly scans 3 to 4 listings, usually the top sponsored and the top 1 or 2 organic listings, and then makes their choice. This is true not only of our study, but also of the recent Microsoft one that just came out. Although Dainow’s charts over time certainly seem to show that position is less important, there could be a number of other factors contributing to this.

I will agree with Brandt, though, that if seen, relevant and compelling copy does make a huge difference in the click through rate of the ad. And for consumer researchers in particular, I still see search advertisers cranking out copy that’s not aligned to intent. But all the evidence I’ve seen points to much higher visibility, and hence click throughs, in the top sponsored spots.

When looking at analysis like Brandt Dainow is presenting, you have to be aware of all the variables. In this case, I’d really like to know the following:

- What were the keywords that made up the campaigns?

- What was the creative that was running for his clients?

- What was the creative the competition was running?

- What were the overall click throughs for the page?

In doing the analysis, you really need to control for these variables before you can draw valid conclusions. Some are ones we can know; others, like the overall click throughs, only the engines would know.

But Dainow is quick to point out that his findings show the need for individual testing on a campaign by campaign basis. And in that, we’re in complete agreement. Our eye tracking tests and other research show general patterns over common searches, and the patterns have been surprisingly consistent from study to study. They probably give us as good an idea as any of what typical searcher behavior might be. But as I’ve said before, there is no such thing as typical behavior. Look at enough searches and an average, aggregate pattern emerges, but each search is different. It depends on searcher intent, on the results and what shows on the page, on the engines, and on what searchers find on the other side of the click. All these things can dramatically affect a scan pattern. So while you might look to our studies or others as a starting point, we continually encourage you to use our findings to set up your own testing frameworks. Don’t take anything for granted. But that’s a message that often doesn’t get through. And my concern is that advertisers looking for a magic bullet will read Dainow’s conclusions highlighted at the top of this post and swallow them whole, without bothering to digest them. And there are still far too many question marks about this analysis for anyone to do that. I’ve contacted Dainow to set up a chat so I can find out more. Hopefully we can shed more light on this question.